Object Detection Algorithm Improvement Series: Replacing the Backbone with LSKNet

Author: 小教学 | Published: 2023-09-28 | Category: Program Development Learning | Views: 253


LSKNet

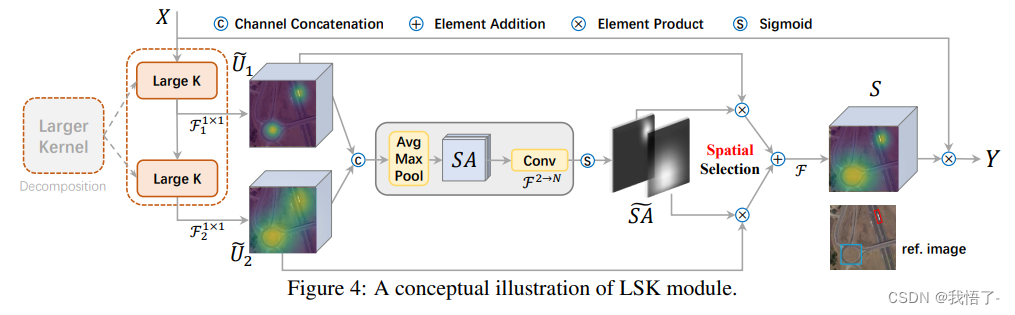

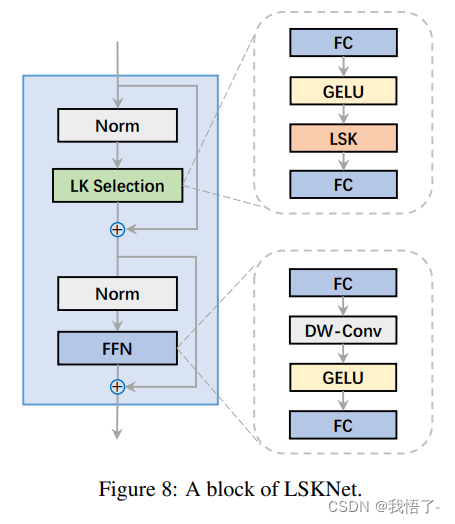

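Large Selective Kernel Network (LSKNet) can dynamically adjust its large spatial receptive field to better model the ranging context (the varying extent of surrounding context) that different objects in remote sensing scenes require. According to the paper's authors, this is, to their knowledge, the first work to explore large and selective kernel mechanisms for remote sensing object detection. Without bells and whistles, LSKNet is reported to be much more lightweight than mainstream detectors while setting new state-of-the-art results on several datasets: HRSC2016 (98.46% mAP), DOTA-v1.0 (81.64% mAP), and FAIR1M-v1.0 (47.87% mAP).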

Paper: Large Selective Kernel Network for Remote Sensing Object Detection
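To make "large spatial receptive field" concrete, the sketch below computes the receptive field of a stack of stride-1 convolutions with the standard rule RF_new = RF_old + (k - 1) * d. The kernel sizes and dilations (5x5 with dilation 1, then 7x7 with dilation 3) are read from the `LSKblock` class in the listing later in this post; the helper name `stacked_receptive_field` is made up for illustration.

```python
def stacked_receptive_field(layers):
    """Return the receptive field after each stride-1 conv in `layers`.

    `layers` is a list of (kernel_size, dilation) pairs, applied in order.
    """
    rf = 1
    fields = []
    for kernel_size, dilation in layers:
        rf += (kernel_size - 1) * dilation
        fields.append(rf)
    return fields


# (kernel, dilation) of conv0 and conv_spatial in LSKblock
lsk_layers = [(5, 1), (7, 3)]
fields = stacked_receptive_field(lsk_layers)
print(fields)  # [5, 23]
```

The two branches therefore see 5x5 and 23x23 neighbourhoods, and the block's spatial gate decides per pixel how much weight each branch gets.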

Add the LSKNet definition file

File path: ultralytics/nn/backbone/lsknet.py

The code is as follows:

import torch
import torch.nn as nn
from timm.layers import DropPath, to_2tuple  # to_2tuple was imported twice; the timm version is the one that took effect
from functools import partial
import numpy as np

__all__ = ['lsknet_t', 'lsknet_s']

class Mlp(nn.Module):
    # Convolutional feed-forward block: 1x1 conv -> depthwise 3x3 -> activation -> 1x1 conv
    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Conv2d(in_features, hidden_features, 1)
        self.dwconv = DWConv(hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Conv2d(hidden_features, out_features, 1)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.dwconv(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x

class LSKblock(nn.Module):
    def __init__(self, dim):
        super().__init__()
        # Two depthwise convs in sequence: 5x5, then 7x7 with dilation 3
        self.conv0 = nn.Conv2d(dim, dim, 5, padding=2, groups=dim)
        self.conv_spatial = nn.Conv2d(dim, dim, 7, stride=1, padding=9, groups=dim, dilation=3)
        self.conv1 = nn.Conv2d(dim, dim//2, 1)
        self.conv2 = nn.Conv2d(dim, dim//2, 1)
        # Maps the (avg, max) descriptors to one spatial mask per branch
        self.conv_squeeze = nn.Conv2d(2, 2, 7, padding=3)
        self.conv = nn.Conv2d(dim//2, dim, 1)

    def forward(self, x):
        attn1 = self.conv0(x)
        attn2 = self.conv_spatial(attn1)

        attn1 = self.conv1(attn1)
        attn2 = self.conv2(attn2)

        attn = torch.cat([attn1, attn2], dim=1)
        avg_attn = torch.mean(attn, dim=1, keepdim=True)
        max_attn, _ = torch.max(attn, dim=1, keepdim=True)
        agg = torch.cat([avg_attn, max_attn], dim=1)
        sig = self.conv_squeeze(agg).sigmoid()
        # Weight each branch by its own spatial mask, then fuse
        attn = attn1 * sig[:,0,:,:].unsqueeze(1) + attn2 * sig[:,1,:,:].unsqueeze(1)
        attn = self.conv(attn)
        return x * attn

class Attention(nn.Module):
    def __init__(self, d_model):
        super().__init__()
        self.proj_1 = nn.Conv2d(d_model, d_model, 1)
        self.activation = nn.GELU()
        self.spatial_gating_unit = LSKblock(d_model)
        self.proj_2 = nn.Conv2d(d_model, d_model, 1)

    def forward(self, x):
        shortcut = x.clone()  # residual branch
        x = self.proj_1(x)
        x = self.activation(x)
        x = self.spatial_gating_unit(x)
        x = self.proj_2(x)
        x = x + shortcut
        return x

class Block(nn.Module):
    def __init__(self, dim, mlp_ratio=4., drop=0.,drop_path=0., act_layer=nn.GELU, norm_cfg=None):
        super().__init__()
        self.norm1 = nn.BatchNorm2d(dim)
        self.norm2 = nn.BatchNorm2d(dim)
        self.attn = Attention(dim)
        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)
        # Learnable per-channel scaling of each residual branch
        layer_scale_init_value = 1e-2
        self.layer_scale_1 = nn.Parameter(
            layer_scale_init_value * torch.ones((dim)), requires_grad=True)
        self.layer_scale_2 = nn.Parameter(
            layer_scale_init_value * torch.ones((dim)), requires_grad=True)

    def forward(self, x):
        x = x + self.drop_path(self.layer_scale_1.unsqueeze(-1).unsqueeze(-1) * self.attn(self.norm1(x)))
        x = x + self.drop_path(self.layer_scale_2.unsqueeze(-1).unsqueeze(-1) * self.mlp(self.norm2(x)))
        return x

class OverlapPatchEmbed(nn.Module):
    """ Image to Patch Embedding
    """

    def __init__(self, img_size=224, patch_size=7, stride=4, in_chans=3, embed_dim=768, norm_cfg=None):
        super().__init__()
        patch_size = to_2tuple(patch_size)
        self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=stride,
                              padding=(patch_size[0] // 2, patch_size[1] // 2))
        self.norm = nn.BatchNorm2d(embed_dim)

    def forward(self, x):
        x = self.proj(x)
        _, _, H, W = x.shape
        x = self.norm(x)
        return x, H, W

class LSKNet(nn.Module):
    def __init__(self, img_size=224, in_chans=3, embed_dims=[64, 128, 256, 512],
                 mlp_ratios=[8, 8, 4, 4], drop_rate=0., drop_path_rate=0., norm_layer=partial(nn.LayerNorm, eps=1e-6),
                 depths=[3, 4, 6, 3], num_stages=4,
                 norm_cfg=None):
        super().__init__()
        self.depths = depths
        self.num_stages = num_stages

        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule
        cur = 0

        for i in range(num_stages):
            patch_embed = OverlapPatchEmbed(img_size=img_size if i == 0 else img_size // (2 ** (i + 1)),
                                            patch_size=7 if i == 0 else 3,
                                            stride=4 if i == 0 else 2,
                                            in_chans=in_chans if i == 0 else embed_dims[i - 1],
                                            embed_dim=embed_dims[i], norm_cfg=norm_cfg)

            block = nn.ModuleList([Block(
                dim=embed_dims[i], mlp_ratio=mlp_ratios[i], drop=drop_rate, drop_path=dpr[cur + j],norm_cfg=norm_cfg)
                for j in range(depths[i])])
            norm = norm_layer(embed_dims[i])
            cur += depths[i]

            setattr(self, f"patch_embed{i + 1}", patch_embed)
            setattr(self, f"block{i + 1}", block)
            setattr(self, f"norm{i + 1}", norm)

        # Dummy forward pass to record the output channels of each stage
        self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]

    def forward(self, x):
        B = x.shape[0]
        outs = []
        for i in range(self.num_stages):
            patch_embed = getattr(self, f"patch_embed{i + 1}")
            block = getattr(self, f"block{i + 1}")
            norm = getattr(self, f"norm{i + 1}")
            x, H, W = patch_embed(x)
            for blk in block:
                x = blk(x)
            # LayerNorm expects channels last: (B, C, H, W) -> (B, H*W, C) and back
            x = x.flatten(2).transpose(1, 2)
            x = norm(x)
            x = x.reshape(B, H, W, -1).permute(0, 3, 1, 2).contiguous()
            outs.append(x)
        return outs

class DWConv(nn.Module):
    # 3x3 depthwise convolution
    def __init__(self, dim=768):
        super(DWConv, self).__init__()
        self.dwconv = nn.Conv2d(dim, dim, 3, 1, 1, bias=True, groups=dim)

    def forward(self, x):
        x = self.dwconv(x)
        return x

def update_weight(model_dict, weight_dict):
    # Copy only the checkpoint tensors whose name and shape match the model
    idx, temp_dict = 0, {}
    for k, v in weight_dict.items():
        if k in model_dict.keys() and np.shape(model_dict[k]) == np.shape(v):
            temp_dict[k] = v
            idx += 1
    model_dict.update(temp_dict)
    print(f'loading weights... {idx}/{len(model_dict)} items')
    return model_dict

def lsknet_t(weights=''):
    model = LSKNet(embed_dims=[32, 64, 160, 256], depths=[3, 3, 5, 2], drop_rate=0.1, drop_path_rate=0.1)
    if weights:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(weights)['state_dict']))
    return model

def lsknet_s(weights=''):
    model = LSKNet(embed_dims=[64, 128, 256, 512], depths=[2, 2, 4, 2], drop_rate=0.1, drop_path_rate=0.1)
    if weights:
        model.load_state_dict(update_weight(model.state_dict(), torch.load(weights)['state_dict']))
    return model

if __name__ == '__main__':
    model = lsknet_t('lsk_t_backbone-2ef8a593.pth')
    inputs = torch.randn((1, 3, 640, 640))
    for i in model(inputs):
        print(i.size())
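As a sanity check on what the test script at the end of the listing should print, the spatial size of each stage can be worked out by hand from the patch-embedding settings in `LSKNet.__init__` (kernel 7, stride 4, padding 7 // 2 = 3 for the first stage; kernel 3, stride 2, padding 1 for the rest) with the usual convolution output-size formula. This is a plain-Python sketch that does not need PyTorch; `conv_out` is a hypothetical helper name.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) per stage, as configured in LSKNet.__init__
stages = [(7, 4, 3), (3, 2, 1), (3, 2, 1), (3, 2, 1)]
embed_dims = [32, 64, 160, 256]  # channel widths of lsknet_t

size = 640
shapes = []
for (kernel, stride, padding), channels in zip(stages, embed_dims):
    size = conv_out(size, kernel, stride, padding)
    shapes.append((channels, size, size))

print(shapes)
# [(32, 160, 160), (64, 80, 80), (160, 40, 40), (256, 20, 20)]
```

The four outputs sit at strides 4, 8, 16 and 32 relative to the input, which corresponds to the P2 to P5 levels used in the YOLOv5 configuration.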

Modify yolo.py

def parse_model(d, ch):  # model_dict, input_channels(3)
    # Parse a YOLOv5 model.yaml dictionary
    LOGGER.info(f"\n{'':>3}{'from':>18}{'n':>3}{'params':>10} {'module':<40}{'arguments':<30}")
    anchors, nc, gd, gw, act = d['anchors'], d['nc'], d['depth_multiple'], d['width_multiple'], d.get('activation')
    if act:
        Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        LOGGER.info(f"{colorstr('activation:')} {act}")  # print
    na = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors  # number of anchors
    no = na * (nc + 5)  # number of outputs = anchors * (classes + 5)

    is_backbone = False
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        try:
            t = m
            m = eval(m) if isinstance(m, str) else m  # eval strings
        except Exception:
            pass  # unknown names stay as strings and are handled by the timm branch below
        for j, a in enumerate(args):
            with contextlib.suppress(NameError):
                try:
                    args[j] = eval(a) if isinstance(a, str) else a  # eval strings
                except Exception:
                    args[j] = a

        n = n_ = max(round(n * gd), 1) if n > 1 else n  # depth gain
        if m in {
                Conv, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, MixConv2d, Focus, CrossConv,
                BottleneckCSP, C3, C3TR, C3SPP, C3Ghost, nn.ConvTranspose2d, DWConvTranspose2d, C3x}:
            c1, c2 = ch[f], args[0]
            if c2 != no:  # if not output
                c2 = make_divisible(c2 * gw, 8)

            args = [c1, c2, *args[1:]]
            if m in {BottleneckCSP, C3, C3TR, C3Ghost, C3x}:
                args.insert(2, n)  # number of repeats
                n = 1
        elif m is nn.BatchNorm2d:
            args = [ch[f]]
        elif m is Concat:
            c2 = sum(ch[x] for x in f)
        # TODO: channel, gw, gd
        elif m in {Detect, Segment}:
            args.append([ch[x] for x in f])
            if isinstance(args[1], int):  # number of anchors
                args[1] = [list(range(args[1] * 2))] * len(f)
            if m is Segment:
                args[3] = make_divisible(args[3] * gw, 8)
        elif m is Contract:
            c2 = ch[f] * args[0] ** 2
        elif m is Expand:
            c2 = ch[f] // args[0] ** 2
        elif isinstance(m, str):
            t = m
            m = timm.create_model(m, pretrained=args[0], features_only=True)
            c2 = m.feature_info.channels()
        # Template branch for backbones built by a factory function that expose a
        # `.channel` list (as the LSKNet class above does); the set is left empty here.
        # elif m in {}:
        #     m = m(*args)
        #     c2 = m.channel
        else:
            c2 = ch[f]

        if isinstance(c2, list):  # a list of channels marks a multi-output backbone
            is_backbone = True
            m_ = m
            m_.backbone = True
        else:
            m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
            t = str(m)[8:-2].replace('__main__.', '')  # module type
        np = sum(x.numel() for x in m_.parameters())  # number params
        m_.i, m_.f, m_.type, m_.np = i + 4 if is_backbone else i, f, t, np  # attach index, 'from' index, type, number params
        LOGGER.info(f'{i:>3}{str(f):>18}{n_:>3}{np:10.0f} {t:<40}{str(args):<30}')  # print
        save.extend(x % (i + 4 if is_backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
        layers.append(m_)
        if i == 0:
            ch = []
        if isinstance(c2, list):
            ch.extend(c2)
            for _ in range(5 - len(ch)):
                ch.insert(0, 0)  # pad so that ch[0..4] line up with P1..P5
        else:
            ch.append(c2)
    return nn.Sequential(*layers), sorted(save)
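The commented-out `elif m in {}` branch in `parse_model` looks like the hook meant for backbones that are built by a factory function and expose a `channel` list, which is what `LSKNet` does. The sketch below imitates that branch in isolation. It assumes the set would list `lsknet_t` and `lsknet_s` (the original listing leaves it empty), and it uses a stub class in place of the real network so that it runs without PyTorch; `StubBackbone` and `resolve_backbone` are hypothetical names.

```python
class StubBackbone:
    """Stand-in for LSKNet: only mimics the `channel` attribute."""

    def __init__(self, channel):
        self.channel = channel


def lsknet_t(weights=''):
    # Stub carrying the channel widths of the real lsknet_t factory.
    return StubBackbone([32, 64, 160, 256])


def lsknet_s(weights=''):
    # Stub carrying the channel widths of the real lsknet_s factory.
    return StubBackbone([64, 128, 256, 512])


def resolve_backbone(m, args):
    """Mimic the custom-backbone branch: build the module, read its channels."""
    if m in {lsknet_t, lsknet_s}:  # assumed contents of the empty set
        m = m(*args)
        c2 = m.channel
        return m, c2
    raise ValueError(f'not a registered backbone factory: {m!r}')


module, c2 = resolve_backbone(lsknet_t, [False])
print(c2)                    # [32, 64, 160, 256]
print(isinstance(c2, list))  # True
```

A list-valued `c2` is what makes `parse_model` take the `is_backbone` path, so under this assumption the backbone entry in the YAML would name `lsknet_t` or `lsknet_s`, and `yolo.py` would need to import those two functions.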

# Method of the model class in yolo.py
def _forward_once(self, x, profile=False, visualize=False):
    y, dt = [], []  # outputs
    for m in self.model:
        if m.f != -1:  # if not from previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
        if profile:
            self._profile_one_layer(m, x, dt)
        if hasattr(m, 'backbone'):
            x = m(x)
            # Pad to 5 entries so that list positions 0..4 match P1..P5
            for _ in range(5 - len(x)):
                x.insert(0, None)
            for i_idx, i in enumerate(x):
                if i_idx in self.save:
                    y.append(i)
                else:
                    y.append(None)
            x = x[-1]
        else:
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
        if visualize:
            feature_visualization(x, m.type, m.i, save_dir=visualize)
    return x
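The backbone branch of `_forward_once` pads the list of feature maps to five entries so that list position matches pyramid level (0 = P1/2 up to 4 = P5/32), then caches only the entries whose index appears in the save list. A minimal sketch with strings standing in for tensors; the function name `route_backbone_outputs` is an assumption for illustration, and the save list is the one implied by the `from` fields of the head in the YAML of this post.

```python
def route_backbone_outputs(features, save):
    """Pad backbone outputs to 5 slots and keep only the saved indices."""
    padded = list(features)
    for _ in range(5 - len(padded)):
        padded.insert(0, None)  # missing low-level maps become None
    cached = [feat if idx in save else None for idx, feat in enumerate(padded)]
    return cached, padded[-1]  # (cached outputs, tensor passed to the next layer)


# A four-stage backbone returns P2..P5; the head reads layers 2, 3, 5, 10, 13, 16, 19.
y, x = route_backbone_outputs(['P2', 'P3', 'P4', 'P5'], save=[2, 3, 5, 10, 13, 16, 19])
print(y)  # [None, None, 'P3', 'P4', None]
print(x)  # P5
```

Only P3 and P4 are cached because the head concatenates with them later; P5 is simply passed on as the input of the next layer (SPPF).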

Modify the YAML file

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

# Parameters
nc: 80  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.25  # layer channel multiple
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# 0-P1/2
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, vovnet39a, [False]],  # 4
   [-1, 1, SPPF, [1024, 5]],  # 5
  ]

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],  # 6
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 7
   [[-1, 3], 1, Concat, [1]],  # cat backbone P4 8
   [-1, 3, C3, [512, False]],  # 9

   [-1, 1, Conv, [256, 1, 1]],  # 10
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 11
   [[-1, 2], 1, Concat, [1]],  # cat backbone P3 12
   [-1, 3, C3, [256, False]],  # 13 (P3/8-small)

   [-1, 1, Conv, [256, 3, 2]],  # 14
   [[-1, 10], 1, Concat, [1]],  # cat head P4 15
   [-1, 3, C3, [512, False]],  # 16 (P4/16-medium)

   [-1, 1, Conv, [512, 3, 2]],  # 17
   [[-1, 5], 1, Concat, [1]],  # cat head P5 18
   [-1, 3, C3, [1024, False]],  # 19 (P5/32-large)

   [[13, 16, 19], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
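The index comments in the YAML (`# 4`, `# 5`, and so on) follow from `parse_model` reserving indices 0 to 4 for the backbone's feature maps, which is why the head entries `[-1, 3]` and `[-1, 2]` pick up P4 and P3. The sketch below traces the channel bookkeeping for the first few layers. It assumes `lsknet_t` is used as the backbone entry (the YAML above names `vovnet39a`, a timm model), and `make_divisible` here is a re-implementation of YOLOv5's round-up helper written from memory, not a copy of the library code.

```python
import math


def make_divisible(x, divisor):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


width_multiple = 0.25
backbone_channels = [32, 64, 160, 256]  # assumption: lsknet_t as the backbone

# Pad to 5 entries so that ch[i] is the channel count at pyramid level i.
ch = [0] * (5 - len(backbone_channels)) + backbone_channels


def scaled(c):
    """Channel count after applying width_multiple, as parse_model does."""
    return make_divisible(c * width_multiple, 8)


ch.append(scaled(1024))    # 5: SPPF
ch.append(scaled(512))     # 6: Conv
ch.append(ch[-1])          # 7: nn.Upsample keeps the channel count
ch.append(ch[-1] + ch[3])  # 8: Concat with backbone P4
ch.append(scaled(512))     # 9: C3
ch.append(scaled(256))     # 10: Conv
ch.append(ch[-1])          # 11: nn.Upsample
ch.append(ch[-1] + ch[2])  # 12: Concat with backbone P3

print(ch)
# [0, 32, 64, 160, 256, 256, 128, 128, 288, 128, 64, 64, 128]
```

Because `Concat` sums whatever channel counts the backbone reports, the head does not need editing when the backbone changes, as long as the backbone returns at most five feature maps ending at P5.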
